Difference between revisions of "Book/HelloXenProject/1-Chapter"

(→Why and what is Virtualization?) |

(→Why and what is Virtualization?) |

||

| Line 24: | Line 24: | ||

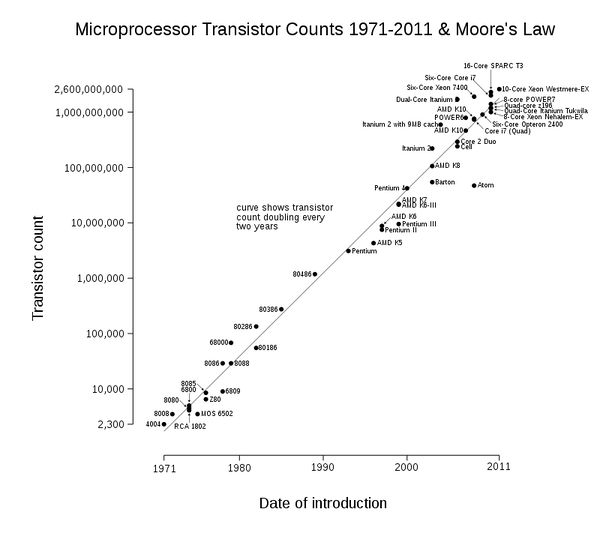

Using Moore’s Law and hardware, Gordon Moore, cofounder of Intel company said: |

Using Moore’s Law and hardware, Gordon Moore, cofounder of Intel company said: |

||

“The number of transistors in a dense integrated circuit doubles approximately every two years.” |

“The number of transistors in a dense integrated circuit doubles approximately every two years.” |

||

| − | This has become a rule to estimate the future of integrated circuits, but some people would estimate it to actually be every 18 months. Please see below picture from [https:// |

+ | This has become a rule to estimate the future of integrated circuits, but some people would estimate it to actually be every 18 months. Please see below picture from [https://en.wikipedia.org/wiki/Moore%27s_law Wikipedia]: |

[[Image:HelloXenProject-1-1.jpg|600px|none|thumb|Figure 1: Microprocessor Transistor Count]] |

[[Image:HelloXenProject-1-1.jpg|600px|none|thumb|Figure 1: Microprocessor Transistor Count]] |

||

Revision as of 07:49, 5 August 2017

Contents

- 1 A Brief History of Virtualization

A Brief History of Virtualization

A Brief History

First of all, this is not the Bible of virtualization; we do not intend to cover everything. This is a look at virtualization with the Xen hypervisor. In the computing world, “virtualization” means creating a virtual version of something, which can be a program, an OS, and so on.

The Very Beginning of Virtualization: The Mainframe

Virtualization is not a new technology. In the 1960s it was already used on mainframe computers made by IBM. Jim Rymarczyk, a programmer who joined IBM in the 1960s as a mainframe expert, was one of the people who worked on the first virtualization systems. At that time, IBM used the CP-67 software. CP-67 was the control program of CP/CMS, a virtual machine operating system developed for the IBM System/360-67. CP/CMS was a time-sharing operating system that became popular due to its excellent performance. CP-67 gave customers the ability to run many applications at once and was essentially the first “spark” of virtualization. It succeeded CP-40, IBM’s first attempt to virtualize a mainframe operating system, which ran on a modified System/360 mainframe, so CP-67 was the second version of the IBM hypervisor. The early hypervisor was paired with the Conversational Monitor System (CMS), a simple interactive operating system. The IBM hypervisor became a commercial product in 1972 as VM technology for the mainframe, and today it lives on as z/VM, a full virtualization solution for the mainframe market. The main advantage of virtualization on mainframes is that the overall resources of the mainframe can be shared between all users. I also recommend looking at the history of the Unix OS. Unix is an example of virtualization at the end-user level, and it will give you a good understanding of the first steps towards application virtualization.

Application Virtualization at Sun Microsystems

Application virtualization is important because it lets one application run on many different operating systems. It began in 1990, when Sun Microsystems started a project called “Stealth”, which aimed to find a better way to write and run applications. The name of the project changed many times, and finally in 1995 Sun Microsystems renamed it “Java”. The Internet connects a lot of computers, each of which may run a different operating system, so a way of running rich applications on all OSes was needed, and Java was a solution to this problem. Java lets developers write applications that run on all OSes via the Java Runtime Environment (JRE): you just install the JRE and your program runs. The JRE is composed of several components, including the Java Virtual Machine (JVM). When you run a Java application, it runs inside the JVM, which you can think of as a very small OS. For more information, see “https://en.wikipedia.org/wiki/Timeline_of_Virtualization_development”.

Why and what is Virtualization?

We spoke about the history of virtualization, but let's define it more on the technical side. So, why is virtualization important and why should you use it?

Let's start with Moore’s Law and hardware. Gordon Moore, co-founder of Intel, said: “The number of transistors in a dense integrated circuit doubles approximately every two years.” This has become a rule of thumb for estimating the future of integrated circuits, although some people put the doubling period at 18 months. See Figure 1 (Microprocessor Transistor Count) from Wikipedia (“https://en.wikipedia.org/wiki/Moore%27s_law”).
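
The doubling rule can be turned into simple arithmetic. The sketch below is only an illustration: the function name and the starting count of one million transistors are assumptions made for this example, not figures from the chapter. It compares the two-year and 18-month doubling periods mentioned above.

```python
def projected_transistors(initial_count: float, years: float,
                          doubling_months: float = 24.0) -> float:
    """Project a transistor count forward, assuming a fixed doubling period."""
    doublings = (years * 12.0) / doubling_months
    return initial_count * 2.0 ** doublings


if __name__ == "__main__":
    start = 1_000_000  # hypothetical starting transistor count
    for months in (24, 18):
        count = projected_transistors(start, years=6, doubling_months=months)
        print(f"doubling every {months} months: {count:,.0f} transistors after 6 years")
```

Over six years, a two-year period gives three doublings and an 18-month period gives four, so the shorter estimate ends up with twice as many transistors.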

We must accept that hardware keeps getting cheaper and more advanced. Compare your old computer with your current one. What do you see? For example, my old computer had a 1.7 GHz Pentium 4 with 256 MB of RAM, but my current computer has an Intel Core i7 with 8 GB of RAM. Hardware becomes faster and cheaper, but are you always using all of its capacity? I bet most of your CPU and RAM sits unused and just consumes energy. What should we do? That is just a PC, so how about servers? Servers use more energy and need extra equipment to keep them running. Nowadays, many machines use just 10 to 15 percent of their capacity; in other words, 85 to 90 percent of the machine's power is unused. Remember Moore’s Law: two years from now we will have even more powerful hardware, but for what? We cannot use all of that capacity, and most of our equipment is wasted. Virtualization can solve this problem by letting us use all of our hardware capacity. IBM's mainframe work later inspired VMware to create virtualization for x86.
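
To make the utilization argument concrete, here is a rough consolidation estimate. It is a minimal sketch under strong assumptions: all machines are treated as having equal capacity, only average CPU load is considered (memory, I/O and peak load are ignored), and the 80 percent target is a hypothetical safety margin chosen for this example.

```python
import math
from typing import Sequence


def hosts_needed(server_utilizations: Sequence[float],
                 target_utilization: float = 0.8) -> int:
    """Estimate how many equal-sized hosts could carry the combined load.

    Each entry is the fraction of one machine that a server really uses,
    for example 0.10 for a machine running at 10 percent.
    """
    total_load = sum(server_utilizations)
    return max(1, math.ceil(total_load / target_utilization))


if __name__ == "__main__":
    # Ten physical servers running at 10 to 15 percent of their capacity.
    fleet = [0.10] * 5 + [0.15] * 5
    print(f"{len(fleet)} servers could fit on about {hosts_needed(fleet)} virtualized hosts")
```

With these numbers the ten lightly loaded servers add up to about 1.25 machines of real work, which fits on two hosts.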

In the computing world, x86 virtualization means hardware virtualization for the x86 architecture. This technology allows multiple OSes to share x86 processor resources in a safe and efficient way. Early x86 virtualization was a complex software technology because it had to make up for the lack of hardware virtualization support, but in 2005 and 2006 Intel and AMD introduced limited hardware virtualization support under the names Intel VT-x and AMD-V. Both provide hardware assistance for virtualization.

AMD-V is the first generation of AMD's virtualization extensions. It was developed under the code name "Pacifica" and first introduced as AMD Secure Virtual Machine (SVM), but the name was later changed to AMD-V.

In 2006, AMD released the Athlon 64, the Athlon 64 X2 and the Athlon 64 FX; all of them use this technology, and they were the first generation of AMD CPUs to support virtualization. In 2005, Intel released two models of the Pentium 4 (Model 662 and 672) as the first Intel processors to support VT-x. As of 2015, almost all newer Intel CPUs support VT-x, and most motherboards include a BIOS setting for it.
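
On Linux you can check whether your own CPU advertises these extensions. The sketch below assumes an x86 Linux system, where `/proc/cpuinfo` has a `flags` line that contains `vmx` for Intel VT-x or `svm` for AMD-V. The function name is made up for this example.

```python
from typing import Set


def virtualization_flags(cpuinfo_text: str) -> Set[str]:
    """Return the hardware virtualization extensions listed in cpuinfo text."""
    found = set()
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() != "flags":
            continue
        flags = value.split()
        if "vmx" in flags:   # Intel VT-x
            found.add("VT-x")
        if "svm" in flags:   # AMD-V (originally Secure Virtual Machine)
            found.add("AMD-V")
    return found


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as cpuinfo:
            result = virtualization_flags(cpuinfo.read())
        print(sorted(result) if result else "no vmx or svm flag found")
    except OSError:
        print("/proc/cpuinfo is not available on this system")
```

The parsing is kept separate from the file access so that it can also be tried on text copied from another machine.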

OK, let me jump to another section.

Types of Virtualization

If you do some research, you will find different classifications of virtualization. Some resources say that three main types exist, and others say four. The three types are client, server and storage virtualization. The four types are operating system virtualization, server virtualization, storage virtualization and hardware virtualization.

Let us say something about each of them:

Operating system Virtualization or containers:

Client virtualization refers to virtualization on a desktop or laptop computer. OS virtualization means moving the main desktop OS into a virtual environment. In this method, the OS is hosted on a server; in other words, one version lives on the server and a copy of it is presented to each user.

Each user can modify his or her own OS without affecting other users. Containers help you move an application from one computing environment to another: the OS kernel runs on the hardware, with several isolated guests on top of it. These isolated guests are what we call containers. Popular container technologies are Docker and LXC; Vagrant is often mentioned alongside them, although it is a tool for managing development environments rather than a container runtime. Containers reduce overhead and improve performance. The big problem with containers is security.

Server Virtualization:

Server virtualization means moving a physical server into a virtual environment. This kind of virtualization addresses many concerns in data centers. Nowadays, one physical server can run more than one virtual server simultaneously, which helps reduce the number of physical servers. IT companies like it a lot because it gives them more control over the growth of their server farms. Server virtualization is critical for IT companies because it lets them add machines quickly; if they cannot add machines, they cannot provide the necessary resources, cannot respond to customers' needs, and will fail. Server virtualization is very popular in web hosting and databases and has many benefits: each virtual server can run its own OS, and rebooting one virtual server does not affect the others.

Storage Virtualization:

Storage virtualization means combining multiple physical HDDs into a single virtualized storage pool. Cloud storage is a well-known form of it. The cloud can enable better functionality and features. Storage virtualization makes backup, archiving and recovery easier for administrators. This technology can be private, public or mixed. Private storage is hosted by your company; public storage is hosted outside your company, like “Dropbox”, “Microsoft OneDrive” and “Amazon S3”; and mixed is a combination of both. In cloud storage, data is stored in logical pools, while the physical storage and the physical environment are owned by the provider. The provider's biggest responsibility is to keep the data available and accessible and to keep the physical environment protected and running at all times. Customers buy this storage and use it for user, organization and other important data. This technology has many advantages: companies only pay for the space they use each month, they save energy, data availability and protection are better, and the storage can be used for copying or importing VM images. Storage virtualization also offers better backup because your data is copied to different locations around the world.
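
The idea of combining several disks into one logical pool can be sketched in a few lines. This is a deliberately simplified model, assuming the disks are simply concatenated one after another; real storage virtualization layers also handle striping, redundancy and failures. The function name and the disk sizes are hypothetical.

```python
from typing import List, Tuple


def locate_block(disk_sizes: List[int], logical_block: int) -> Tuple[int, int]:
    """Map a block number in the pool to (disk index, block on that disk)."""
    if logical_block < 0:
        raise IndexError("logical block must not be negative")
    remaining = logical_block
    for index, size in enumerate(disk_sizes):
        if remaining < size:
            return index, remaining
        remaining -= size  # skip past this disk and keep looking
    raise IndexError("logical block is beyond the end of the pool")


if __name__ == "__main__":
    pool = [100, 50, 200]  # three disks of different sizes, in blocks
    for block in (0, 120, 349):
        disk, offset = locate_block(pool, block)
        print(f"logical block {block} lives on disk {disk}, block {offset}")
```

The user of the pool only sees block numbers from 0 to 349 and never needs to know which physical disk holds the data.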

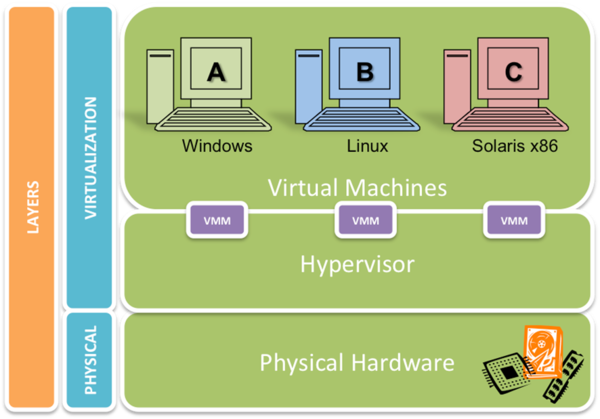

Hardware Virtualization

Hardware virtualization means taking the components of a real machine and making them virtual. Another name for it is “platform virtualization”, which refers to creating a VM that behaves like a real computer with an OS. Software running on these VMs is separated from the underlying hardware resources, because virtualization hides the physical characteristics of the machine from users. For example, you can run Microsoft Windows 10 on a Linux machine or vice versa. A Microsoft Windows 10 guest running in a VM cannot tell that it is virtualized and behaves as if it were running on a real machine. The software that creates a VM on the hardware is called a hypervisor or Virtual Machine Monitor (VMM).

Different types of hardware virtualization exist:

- Full Virtualization

- Partial Virtualization

- ParaVirtualization

In “Full Virtualization”, the VM simulates enough hardware that the guest OS can run without any modification. In “Partial Virtualization”, the VM simulates only part of the underlying hardware, particularly address spaces, which usually means that an entire OS cannot run in the VM. This kind of hardware virtualization is important because of its handling of address spaces. In “ParaVirtualization”, the VM does not simulate hardware but instead offers a special API that the guest OS must be modified to use. Because the OS must be modified, its source code must be available. This technology was introduced by the Xen Project team. It is useful because it does not need any virtualization extensions on the host CPU, so it enables virtualization on hardware that does not support hardware-assisted virtualization.

Virtualization and Security

In the virtualization world, you can create a VM and isolate it from the host. For example, you can launch a virtual network between VMs and use virtual HDDs for testing and forensics.

First of all, virtualization adds additional layers of complexity, so monitoring and finding security vulnerabilities become more difficult. An attacker must also do more research in order to discover vulnerabilities. Virtualization can provide isolation, which is the core feature of network virtualization. A virtualized network is isolated from other virtual networks and from physical networks. The important thing is that no firewalls, ACLs and so on are required for this isolation. Virtual networks are isolated from the physical infrastructure because traffic between hypervisors is encapsulated and the physical network operates in a different address space. For example, the physical network can be IPv6 while the virtual network is IPv4, or vice versa. This protects the underlying physical network from attacks. In networking there is a concept called “network segmentation”. It means splitting a computer network into subnetworks, each of which is a network segment. Network segmentation can improve security and performance. It improves security because when an attacker gains access to your network, segmentation limits how much of the network can be reached. It can be implemented with a hypervisor switch or Open vSwitch.
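
Network segmentation is easy to try out with Python's standard `ipaddress` module. The sketch below only does the address arithmetic; it does not configure any switch or hypervisor. The function names and the `192.0.2.0/24` example network (a range reserved for documentation) are assumptions made for this illustration.

```python
import ipaddress
from typing import List


def split_into_segments(network: str, new_prefix: int) -> List[ipaddress.IPv4Network]:
    """Split one network into equally sized segments (subnets)."""
    return list(ipaddress.ip_network(network).subnets(new_prefix=new_prefix))


def same_segment(segments: List[ipaddress.IPv4Network], a: str, b: str) -> bool:
    """Return True if both addresses fall inside the same segment."""
    addr_a, addr_b = ipaddress.ip_address(a), ipaddress.ip_address(b)
    return any(addr_a in seg and addr_b in seg for seg in segments)


if __name__ == "__main__":
    segments = split_into_segments("192.0.2.0/24", new_prefix=26)
    for seg in segments:
        print(seg)
    # A web VM and a database VM placed in different segments.
    print(same_segment(segments, "192.0.2.10", "192.0.2.70"))
```

Two machines in different segments can only talk to each other through whatever sits between the segments, which is where access can be limited.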

You should keep in mind that these features do not prevent mistakes. Securing a virtual machine is the same as securing a physical machine: if you configure your VM badly, for example by leaving unnecessary ports open, your VM can be at risk. Another mistake concerns virtual networks: if you host your vital data or databases without segmentation then...

Fortunately, Xen provides good security features, which we will talk about later.

A good example of an OS that was created for security on top of Xen is “Qubes-OS”. For more information about this project see “https://www.qubes-os.org/”.

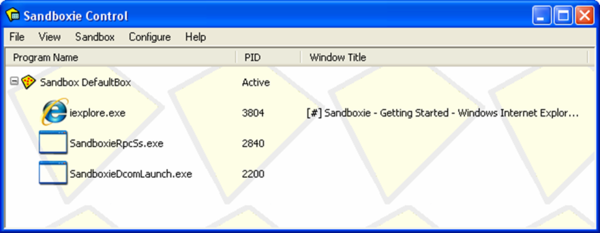

Another good example is the “sandbox”. A sandbox is a mechanism for separating running programs. You can use a sandbox to execute untrusted code or programs from untrusted users and websites. Sandboxes are a good example of virtualization being used to run suspicious programs without any harm to the host device.

Sandbox

Good examples of sandbox implementations are “SELinux”, “AppArmor”, virtual machines, the “JVM”, “Sandboxie” and some features in browsers like “Chromium”. Sandboxie is an isolation program for the Windows OS developed by Invincea. It lets users install and run applications without modifying the drive:

You can download it from “http://www.sandboxie.com/”.

For Sandboxing under Linux see “Mbox” at “https://pdos.csail.mit.edu/archive/mbox/”.

As you can see, virtualization has advantages and disadvantages, and over time hackers and malware authors have worked on it and found ways to bypass it. A good example of this is “Paranoid Fish”. You can see more information about this project at “https://github.com/a0rtega/pafish”.

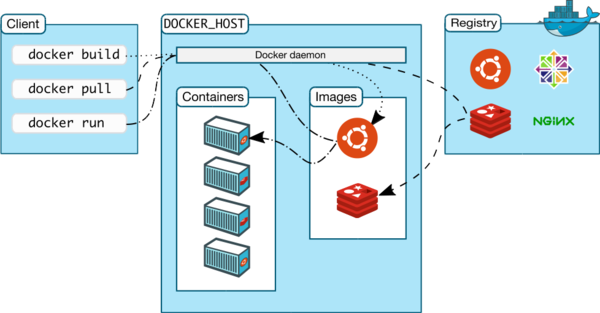

Containers vs Virtualization

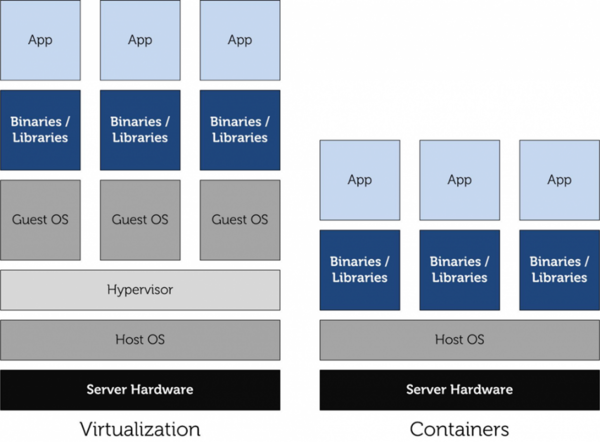

The next topic is containers. If you remember, we told you something about “operating-system-level virtualization”, but we want to show you how this technology differs from virtualization.

As we said, containers are not a new technology and Unix used them many years ago, but current technologies like “Docker” and “LXC”, together with tooling such as “Vagrant”, have made this technology alive and hot. In 2014, the Docker team, with contributions from other companies like Canonical, Google, Red Hat and Parallels, created a standard that allows containers to work with Linux namespaces and control groups without any admin access and offers a better interface across all Linux distributions. This allows many containers to run in a single VM. Before that, you had to use a VM for each application to separate them from each other, but now you can run all of them in one VM environment, so you do not need many VMs on a machine. A big problem with VMs was overhead, and containers solved it. Containers also solved a problem that system administrators and developers had faced for many years: they produced a tool, but it would not run in some environments because of a library version mismatch or a missing package. Docker solved this problem by making an image of an entire application with all its dependencies, which you move to your target environment so that your app works everywhere. What do you think? I guess you are thinking that you can solve this problem with VMs too, by taking an image of an entire virtual host and launching it on the target. The difference is that containers are very lightweight and your app is ready in a few seconds. For all their advantages, containers have disadvantages too. One of the biggest is security, which is a huge problem for cloud environments. Containers share the same hooks into the kernel, so if any vulnerability exists in the kernel, an attacker has a way to get into your containers. Until now, containers have not provided a boundary as secure as that of VMs. If you search for Docker vulnerabilities, you can find some interesting topics. For example, a vulnerability in Docker let attackers escape the container and gain full access to the server. A tool like “Clair” (https://github.com/coreos/clair) can help you analyze vulnerabilities in apps and Docker containers. For more information about Docker security you can look at “http://www.cvedetails.com/product/28125/Docker-Docker.html?vendor_id=13534” and “https://www.blackhat.com/docs/eu-15/materials/eu-15-Bettini-Vulnerability-Exploitation-In-Docker-Container-Environments-wp.pdf”.

Another problem for containers is scalability. Five security concerns when using Docker are:

- Kernel exploits

- Denial-of-service attacks

- Container breakouts

- Poisoned images

- Compromising secrets

The main idea behind a hypervisor is to emulate the underlying physical hardware and create virtual hardware on which you can install your OS. The diagram in this section shows the difference between containers and VMs.

As you can see, if you need security, choose VMs; otherwise choose containers.

Open Source Linux Virtualization Software

Now the time has come to look at some virtualization software and familiarize you with it.

Xen

The Xen Project was born at the University of Cambridge as a research project led by Ian Pratt, who later co-founded XenSource, Inc. with Simon Crosby. The first version of Xen was released in 2003. The Xen Project was supported by XenSource, Inc., and in October 2007 Citrix bought XenSource, Inc. After the acquisition, Citrix continued to support the free version of Xen and also sold an enterprise version of it as “Citrix XenServer”. Citrix also uses the Xen brand on other products that have no relationship to Xen, for example "XenApp" and "XenDesktop". Xen changed a lot, and on 15 April 2013 the project moved under the auspices of the Linux Foundation and was renamed from “Xen” to “Xen Project” to differentiate it from the older name. With this change, project members like Amazon, AMD, Bromium, Cisco, Citrix, Google, Intel, Oracle, Samsung and others continued to support the project. We have mentioned the Xen Project before; here we say a little more about it without diving deep.

The Xen Project hypervisor manages CPU, memory and other hardware for all virtual machines, including the most privileged domain. In Xen Project terms, VMs are called “domains” and the privileged domain is called “dom0”. By default, dom0 is the only virtual machine that has direct access to the hardware. From dom0, the hypervisor can be managed and unprivileged domains (domU) can be launched.

Dom0 runs a version of Linux or BSD, and the other domains can run other OSes such as Microsoft Windows.

Since version 3.0, the Linux kernel includes support for running as both dom0 and domU. The Xen Project supports live migration of VMs and also supports load balancing, which helps prevent downtime.

Load balancing distributes workloads across multiple computing resources, such as computers, clusters, network links, CPUs and disks. It increases reliability and availability through redundancy.

We said something about types of virtualization earlier. Xen supports five modes: HVM, HVM with PV drivers, PVHVM, PVH and paravirtualization (PV).
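
As a toy illustration of load balancing, the sketch below places each workload on whichever host currently carries the least load. This greedy "least loaded first" rule is an assumption chosen for simplicity and is not how the Xen Project or any particular tool decides placement. The workload names, costs and host names are hypothetical.

```python
from typing import Dict, List, Tuple


def assign_workloads(workloads: Dict[str, int],
                     hosts: List[str]) -> Tuple[Dict[str, str], Dict[str, int]]:
    """Greedily place each workload on the currently least-loaded host."""
    load = {host: 0 for host in hosts}
    placement = {}
    # Placing the heaviest workloads first tends to give a more even result.
    for name, cost in sorted(workloads.items(), key=lambda item: -item[1]):
        target = min(hosts, key=lambda host: load[host])
        placement[name] = target
        load[target] += cost
    return placement, load


if __name__ == "__main__":
    vms = {"web": 4, "db": 6, "cache": 2, "batch": 3}
    placement, load = assign_workloads(vms, ["host-a", "host-b"])
    print(placement)
    print(load)
```

If one host fails, the same function can be called again with the remaining hosts, which is the redundancy idea mentioned above.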

KVM

KVM, or Kernel-based Virtual Machine, is a virtualization module for the Linux kernel that turns the kernel into a hypervisor. It has been part of the kernel since version 2.6.20. KVM needs a CPU that supports hardware virtualization (Intel VT-x or AMD-V), which we spoke about earlier. With KVM, the Linux kernel acts as a hosted hypervisor (Type 2 hypervisor), that is, a virtual machine manager that runs as software on an existing OS. KVM simplifies management and improves performance in virtualized environments. KVM creates a VM and coordinates CPU, memory, HDD and other hardware for it via the host OS.

KVM supports a wide range of guest OSes, such as Linux, Windows, Solaris and even OS X; a modified version of QEMU can use KVM to run OS X. KVM does not do any emulation itself. Instead, it exposes the /dev/kvm interface, which a userspace program uses for:

- Setting up the address space of the guest VM.

- Creating a virtual machine.

- Reading and writing VCPU registers.

- Injecting an interrupt into a VCPU.

- Running a VCPU.
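
Every operation in the list above is an `ioctl` call on a file descriptor obtained from /dev/kvm. The sketch below performs only the simplest such call, asking the kernel for its KVM API version. It assumes a Linux host; the request number `0xAE00` is taken from the `KVM_GET_API_VERSION` definition in the kernel's `linux/kvm.h` header, and the function name is made up for this example. It creates no VM and returns `None` when KVM is not usable.

```python
import os
from typing import Optional

# _IO(KVMIO, 0x00) with KVMIO = 0xAE, as defined in <linux/kvm.h>.
KVM_GET_API_VERSION = 0xAE00


def kvm_api_version(device: str = "/dev/kvm") -> Optional[int]:
    """Return the KVM API version, or None if KVM cannot be queried."""
    try:
        import fcntl  # only available on Unix-like systems
        fd = os.open(device, os.O_RDWR)
    except (ImportError, OSError):
        return None  # no KVM module, no permission, or not Linux
    try:
        return fcntl.ioctl(fd, KVM_GET_API_VERSION, 0)
    except OSError:
        return None
    finally:
        os.close(fd)


if __name__ == "__main__":
    version = kvm_api_version()
    if version is None:
        print("KVM is not available to this user on this machine")
    else:
        print(f"KVM API version: {version}")
```

Programs such as QEMU follow the same pattern and then issue further ioctl calls to create the VM, its memory and its VCPUs.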

For the BIOS, KVM uses SeaBIOS, an open source implementation of a 16-bit x86 BIOS that supports standard BIOS features.

You may ask yourself: what are the benefits of KVM? Here are some:

Security: Because KVM is built on top of the Linux kernel, it can use the capabilities of SELinux. With this, KVM can provide Mandatory Access Control security between virtual machines.

QoS: Because KVM is part of the Linux kernel, a VM is no different from any other program running on Linux, so the administrator can define thresholds for CPU, memory and so on, and guarantee QoS for VMs.

Open Source: KVM is an open source solution, so it provides the benefits of open source and makes interoperable solutions possible. As you might guess, support for new hardware features and new generations of CPU architectures can be added to it. For example, the 64-bit ARMv8 architecture targets the server and mobile platforms and KVM supports it, so KVM on ARMv8 is a key virtualization technology for many markets.

Other benefits are Full Virtualization and Near Native Performance.

Alongside these advantages, KVM has some disadvantages too, for example complex networking, limited processor support and its dependence on CPU virtualization support.

You can find good performance benchmarks of Xen and KVM at “https://major.io/2014/06/22/performance-benchmarks-kvm-vs-xen/”.

Red Hat acquired Qumranet, the company behind KVM, in 2008.

Below is a figure from Wikipedia about the KVM architecture:

OpenVZ

OpenVZ (Open Virtuozzo) is an operating-system-level virtualization technology for Linux that allows you to run multiple isolated OS instances on one server. It is a container technology like LXC. OpenVZ cannot provide full virtualization like the Xen Project; it is just a patch for the Linux kernel and can run only Linux. It is very fast but has a big disadvantage: all OpenVZ containers share the same architecture and kernel version. OpenVZ virtual machines are jailed containers and not true VMs like those of the Xen Project. OpenVZ has improved over time. In old versions, all virtual environments used the same file system, isolated by “chroot”, but in the current version each container has its own file system.

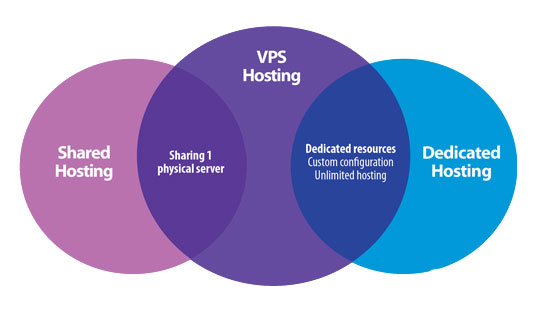

A good advantage of OpenVZ is that memory allocation is soft: memory that is not used by one virtual environment can be used by others. I guess you have heard of “virtual private servers (VPSs)”. OpenVZ containers are called VPSs too, and in the computing world a VPS is a VM that is sold as a service. A VPS runs its own OS, and the customer has full access to it and can install anything on it. VPSs are in some ways equivalent to dedicated servers, but their prices are much lower. VPS performance can be lower than that of dedicated servers because VPSs run on shared hardware. I found a diagram about it, which you can see below:

In April 2006, two good features were released for OpenVZ: “live migration” and “checkpointing”. With live migration, you can move a container from one physical server to another without shutting the container down. Checkpointing means that a container is frozen and its whole state is saved to a file on disk. You can then move this file to another machine and restore it there.

LXC 2.0 has been released, and if you search for it, you can find articles about converting OpenVZ to LXC. For example, “https://pve.proxmox.com/wiki/Convert_OpenVZ_to_LXC”.

You can't run the Windows OS on OpenVZ, but I found a trick for it: https://freevps.us/thread-2789.html

Linux-Vserver

The first version of Linux-VServer was released in October 2001. Like OpenVZ, Linux-VServer adds operating-system-level virtualization capabilities to the Linux kernel. It partitions computer resources like the file system, CPU, network and memory into security contexts, so that processes in one context cannot launch a DoS attack on another. With Linux-VServer, you can create many independent VPSs that run on one physical server at full speed and share its hardware. Because VPSs are independent, all services like SSH, databases, mail and so on can start with no or very small modifications. VPSs are isolated from each other, and each VPS has its own authentication. Like OpenVZ, Linux-VServer uses the “chroot” utility to provide security and can only run Linux guests. Linux-VServer does not emulate any hardware; its goal is to isolate applications, and this isolation is done by the kernel. Linux-VServer can be integrated with grsecurity for better security. It has some advantages and disadvantages, listed below:

Advantages:

- It does not have any emulation overhead.

- Because of the common file system, backup is easier.

- Networking is based on isolation, not virtualization, so there is no additional overhead for packets.

- You can run it in a Xen guest.

Disadvantages:

- The host kernel must be patched.

- Clustering and live migration are not supported.

- Because networking is based on isolation, a VPS cannot create its own internal routing or firewall.

- An additional patch is needed for IPv6 support.

- When you shut down a guest, its IP is brought down on the host as well.

It has other features like “resource sharing”, “resource limiting”, “good disk scheduling”, “hidden packet counters” and more.

For more information about building guest systems, see “http://linux-vserver.org/Building_Guest_Systems”.

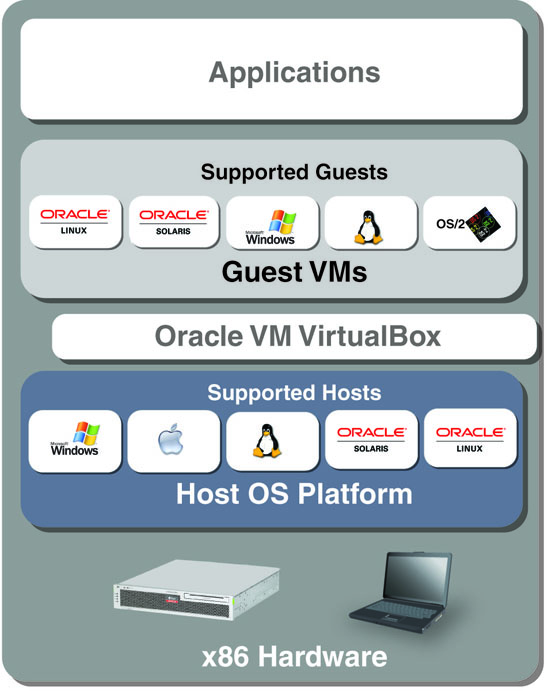

VirtualBox

The first version of VirtualBox was released by a German company named Innotek GmbH as closed-source but free software. In January 2007, Innotek GmbH released an open source version, VirtualBox Open Source Edition (OSE), under GPL version 2. The company was acquired by Sun Microsystems in February 2008, and Sun Microsystems was acquired by Oracle in January 2010. When Sun Microsystems bought Innotek, it renamed the product to Sun xVM VirtualBox. xVM was a product line from Sun Microsystems that addressed virtualization technology on x86 platforms. It included the Sun xVM hypervisor, a component of the Solaris OS that provided the standard features of a Xen-based hypervisor for x86, and Sun xVM Server, which was based on the xVM hypervisor project and was intended to support Microsoft Windows, Linux and Solaris as guest OSes. After Oracle acquired Sun, the name changed from Sun VirtualBox to Oracle VM VirtualBox.

VirtualBox, or VB, can be installed on many platforms, including Linux, Windows, Solaris, FreeBSD and OS X, and it supports many guest OSes on Linux and Windows hosts. To provide better performance and graphics resolution, VB uses the "Guest Additions" package, which makes it more powerful. It is a CD-ROM image in .iso format named “VBoxGuestAdditions.iso”. After you install this package, the guest OS gets better performance and the following features:

- Mouse pointer integration

- Shared folders

- Better resolution and video support

- Seamless windows

- Shared clipboard

If you want to enable features like “virtual USB 2.0/3.0 controller support”, “PXE boot for Intel cards”, “disk image encryption” and “RDP”, you must use a closed-source pack for VirtualBox named “VirtualBox Extension Pack”. It is a file with the “.vbox-extpack” extension and is easy to install: just double-click on it.

VB provides “Full Virtualization”, which we described earlier. VB has many good features, some of them experimental; we list just a few of them:

- 64-bit guests (hardware virtualization support such as Intel VT-x or AMD-V is required)

- Snapshots

- Seamless mode

- Command line interaction

- ICH9 chipset emulation

- EFI firmware

- Host CD/DVD drive pass-through

A diagram of the VirtualBox architecture is below:

Compare Virtualization Software

In this section, I want to compare different virtualization software with each other. We will not cover all virtualization software, but will look at some of the most important:

| Name | Full Virt | ParaVirt | OS Virt (Containers) | Host OS | Architectures | License |
|---|---|---|---|---|---|---|
| Xen | ✓ | ✓ | ✗ | GNU/Linux, Unix-like, FreeBSD | i686, x86-64, IA64, PPC | GPL V2 |
| KVM | ✓ | ✓ | ✗ | GNU/Linux, Unix-like | i686, x86-64, IA64, PPC, S390 | GPL V2 |
| OpenVZ | ✗ | ✗ | ✓ | Linux | i686, x86-64, IA64, PPC, SPARC | GPL |
| Linux-Vserver | ✗ | ✗ | ✓ | Linux | Everywhere Linux is | GPL V2 |
| VirtualBox | ✓ | ✓ | ✗ | GNU/Linux, Windows, OS X x86, Solaris, FreeBSD | i686, x86-64 | GPL V2 |
| Citrix XenServer | ✓ | ✓ | ✗ | No host OS | x86, x86-64, ARM, IA-64, PPC | GPL V2 |
| VMware ESX | ✓ | ✗ | ✗ | No host OS | i686, x86-64 | Closed source |

OK.

At the end of this chapter, I want to say something about what a VM is. In the computing world, a VM is an emulation of a computer. Virtual machines are based on computer architectures and provide the functionality of a real computer. As we said, different kinds of virtual machines exist, and each of them provides different features and abilities. The physical computer is called the “host” and the virtual machine is called the “guest”, and the guest OS behaves as if it were running on a real computer.

You can manage your hypervisor and containers via different GUI and web managers:

- virt-manager (Xen and KVM)

- ConVirt (Xen and KVM)

- Ganeti (Xen and KVM)

- CloudStack (Xen, KVM and VMware)

- phpVirtualBox (VirtualBox)

- XenCenter (Citrix XenServer)

- SolusVM (KVM, Xen & OpenVZ)

- OpenNode (KVM and OpenVZ)

- Xen Orchestra