Xen power management


This document describes the power management features of Xen, including CPU P-states (cpufreq) and CPU C-states (cpuidle).

Contents

- 1 CPU P-states (cpufreq)

- 2 CPU C-states (cpuidle)

- 2.1 cpuidle in Xen

- 2.2 Potential side-effects of cpuidle

- 2.2.1 Latency

- 2.2.2 System time/TSC skew

- 3 xenpm - userspace control tool

- 4 Xen 3.4 improvement in power management

- 5 Xen hypervisor clocksource option

- 6 Note about AMD CPUs

- 7 Also See

CPU P-states (cpufreq)

A CPU P-state (performance state) is a processor power-saving state defined in the ACPI specification. P-states save power by changing the CPU frequency and voltage. Among the P-states P0, P1, … Pn, P0 has the highest frequency and thus the highest power consumption, while Pn has the lowest frequency and thus the lowest power consumption. Many processors have their own P-state implementations; EIST (Enhanced Intel SpeedStep® Technology) and AMD PowerNow!™ Technology are typical examples.

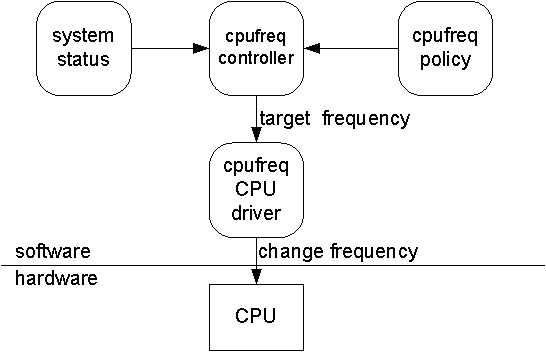

Xen supports this feature through its cpufreq driver. The logic is similar to that of a commodity OS: system software periodically measures system status such as CPU utilization, determines the appropriate CPU frequency from the cpufreq policy and the current system status, and finally issues a platform-dependent command to change the CPU frequency. Figure 1 shows the logic.

Figure 1: cpufreq

For historical reasons, Xen has two implementations. The first is domain0 based cpufreq, which implements the cpufreq logic in domain0. The second is hypervisor based cpufreq, which implements the cpufreq logic in the hypervisor. The default is hypervisor based cpufreq.

Domain0 based cpufreq

Domain0 based cpufreq reuses the domain0 kernel's cpufreq code and lets domain0 handle the cpufreq logic. The Xen hypervisor provides two platform hypercalls, XENPF_change_freq and XENPF_getidletime, to help the domain0 kernel read the system status and change the CPU frequency.

Enabling domain0 based cpufreq is simple: add the Xen boot option "cpufreq=dom0-kernel" to the grub bootloader entry, for example:

title Xen

root (hd0,0)

kernel /boot/xen.gz cpufreq=dom0-kernel

module /boot/vmlinuz-2.6.18.8-xen ro root=/dev/sda1

module /boot/initrd-2.6-xen.img

Note: Domain0 based cpufreq has one limitation: the number of VCPUs in domain0 must equal the number of physical CPUs, and each domain0 VCPU must be pinned to its respective physical CPU.

Hypervisor based cpufreq

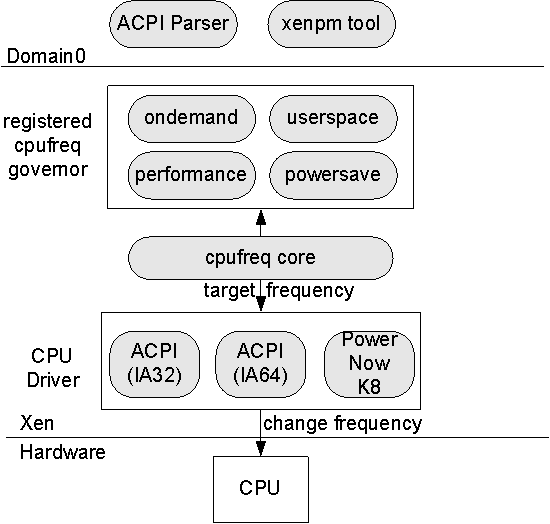

Hypervisor based cpufreq implements most of the cpufreq logic in the hypervisor. Figure 2 illustrates the logic:

Figure 2: hypervisor based cpufreq

The hypervisor has three components: the cpufreq core, the cpufreq governor, and the cpufreq CPU driver. The cpufreq core controls the overall logic. The cpufreq governor controls the cpufreq policy, i.e. which frequency the CPU should move to. The cpufreq CPU driver issues the commands that change the physical CPU frequency. Xen currently has four governors:

- ondemand: choose the best frequency which best fit the

- userspace: choose the frequency specified by the user

- performance: select the highest frequency

- powersave: select the lowest frequency

The default governor is the userspace governor. Xen also supports three CPU drivers: ACPI (IA32) for Intel x86 processors, ACPI (IA64) for Intel Itanium processors, and PowerNow K8 for AMD processors.
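The four governor policies can be illustrated with a small Python sketch. This is not Xen's code: the function name `pick_frequency`, the `load_pct` input (utilization relative to the highest frequency), and the simplified ondemand rule are assumptions made for illustration only. The `up_threshold` default of 80 mirrors the value shown in the xenpm sample output in this document.

```python
from typing import Optional, Sequence


def pick_frequency(governor: str,
                   freqs_khz: Sequence[int],
                   load_pct: float = 0.0,
                   user_khz: Optional[int] = None,
                   up_threshold: int = 80) -> int:
    """Return a target frequency in kHz for one CPU (illustrative only)."""
    freqs = sorted(freqs_khz)
    lowest, highest = freqs[0], freqs[-1]

    if governor == "performance":
        return highest
    if governor == "powersave":
        return lowest
    if governor == "userspace":
        # The user must pick one of the frequencies the CPU offers.
        if user_khz not in freqs:
            raise ValueError("user_khz must be one of the available frequencies")
        return user_khz
    if governor == "ondemand":
        # Assumed rule: jump to the top frequency when busy, otherwise take
        # the lowest frequency that keeps utilization under the threshold.
        if load_pct > up_threshold:
            return highest
        needed = highest * load_pct / up_threshold
        return next(f for f in freqs if f >= needed)
    raise ValueError(f"unknown governor: {governor}")


if __name__ == "__main__":
    available = [2534000, 2533000, 1600000, 800000]
    for load in (10, 40, 90):
        print(load, pick_frequency("ondemand", available, load_pct=load))
```

The frequency list in the usage example is taken from the `scaling_avail_freq` line of the sample output; the load values are arbitrary.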

Domain0 has two components: the ACPI parser and the xenpm tool. The ACPI parser parses the ACPI tables and passes the information to the hypervisor's cpufreq core. The xenpm tool is a userspace cpufreq control tool that can select the cpufreq governor, set the frequency for the userspace governor, and so on. The xenpm section later in this document has more details.

Usage: the Xen boot option "cpufreq=xen" enables hypervisor based cpufreq. From c/s 18950 onward it is enabled by default with the userspace governor, so no Xen boot option is needed.

xenpm can be used to control cpufreq. Some typical commands are:

- set cpufreq governor

# xenpm set-scaling-governor ondemand|performance|powersave

- get cpufreq P-state status

# xenpm get-cpufreq-states

cpu id : 0

total P-states : 4

usable P-states : 4

current frequency : 800 MHz

P0 : freq [2534 MHz]

transition [00000000000000000003]

residency [00000000000000002668 ms]

P1 : freq [2533 MHz]

transition [00000000000000000000]

residency [00000000000000000000 ms]

P2 : freq [1600 MHz]

transition [00000000000000000000]

residency [00000000000000000000 ms]

*P3 : freq [0800 MHz]

transition [00000000000000000003]

residency [00000000000000000237 ms]

- get cpufreq parameters

# xenpm get-cpufreq-para 0

cpu id : 0

affected_cpus : *0 1

cpuinfo frequency : max [2534000] min [800000] cur [800000]

scaling_driver : acpi-cpufreq

scaling_avail_gov : userspace performance powersave ondemand

current_governor : ondemand

ondemand specific :

sampling_rate : max [10000000] min [10000] cur [20000]

up_threshold : 80

scaling_avail_freq : 2534000 2533000 1600000 *800000

scaling frequency : max [2534000] min [800000] cur [800000]
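The P-state listing printed by "xenpm get-cpufreq-states" can be summarized with a short script. The following Python sketch parses text in the layout shown above and reports the share of time spent in each P-state. The function names and the regular expression are assumptions based only on the sample output in this document; the exact spacing of real xenpm output may differ between versions.

```python
import re

# Sample text copied from the xenpm output shown in this document.
SAMPLE = """\
P0 : freq [2534 MHz]
transition [00000000000000000003]
residency [00000000000000002668 ms]
P1 : freq [2533 MHz]
transition [00000000000000000000]
residency [00000000000000000000 ms]
P2 : freq [1600 MHz]
transition [00000000000000000000]
residency [00000000000000000000 ms]
*P3 : freq [0800 MHz]
transition [00000000000000000003]
residency [00000000000000000237 ms]
"""

_PSTATE_RE = re.compile(
    r"(\*?)P(\d+)\s*:\s*freq\s*\[(\d+) MHz\]\s*"
    r"transition\s*\[(\d+)\]\s*"
    r"residency\s*\[(\d+) ms\]"
)


def parse_pstates(text):
    """Return one dict per P-state found in the text."""
    states = []
    for star, index, mhz, transitions, residency in _PSTATE_RE.findall(text):
        states.append({
            "index": int(index),
            "current": star == "*",       # the '*' marks the current P-state
            "freq_mhz": int(mhz),
            "transitions": int(transitions),
            "residency_ms": int(residency),
        })
    return states


def residency_share(states):
    """Map P-state index -> percentage of the total residency."""
    total = sum(s["residency_ms"] for s in states)
    if total == 0:
        return {s["index"]: 0.0 for s in states}
    return {s["index"]: 100.0 * s["residency_ms"] / total for s in states}


if __name__ == "__main__":
    for index, pct in residency_share(parse_pstates(SAMPLE)).items():
        print(f"P{index}: {pct:.1f}%")
```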

CPU C-states (cpuidle)

ACPI defines processor power states as C-states, which include C0, C1, C2, C3, and so on. C0 is the normal working state whereby the CPU executes instructions. C1 ~ Cn are progressively higher power saving states where CPU stops executing instructions and powers down some internal components. The higher the C state, the greater the power savings, and also the greater the wakeup latency. A processor in a C1~Cn state will be woken up by an event (e.g. interrupt) and transition back to C0.

There are several ways to make the processor enter a C state:

- The HLT instruction makes the processor enter the C1 state.

- Reading certain I/O ports can make the processor enter a different C-state. The I/O port number is platform specific and can be retrieved from the ACPI tables.

- The platform can also provide specific instructions to enter a C-state. For example, on Intel processors the monitor/mwait instruction pair can be used to enter a C-state.

cpuidle in Xen

Xen also supports CPU C-states. The logic can be explained by answering two questions:

- When to enter a C-state: this is straightforward. When a physical CPU has no task (VCPU) assigned, it runs the idle vcpu, which in turn puts the CPU into a C-state. When a break event (e.g. an interrupt) occurs, the CPU is brought out of the C-state and back to work.

- Which C-state to enter: this is more complicated. A deeper C-state saves more power but also has more latency, so a good algorithm should balance power saving against performance. Xen uses the menu governor to select the deepest C-state that still satisfies the current latency requirement.
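The "deepest C-state that satisfies the latency requirement" rule can be sketched as follows. This is only an illustration of the latency constraint described above, not the menu governor itself, which weighs more inputs. The `CState` type, the function name, and the exit latency figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class CState:
    name: str
    exit_latency_us: int  # hypothetical wakeup latency in microseconds


def pick_cstate(states: Sequence[CState], latency_limit_us: int) -> CState:
    """Pick the deepest state whose exit latency fits within the limit.

    `states` must be ordered from shallowest to deepest. The shallowest
    state is the fallback when nothing deeper fits.
    """
    chosen = states[0]
    for state in states[1:]:
        if state.exit_latency_us <= latency_limit_us:
            chosen = state
    return chosen


if __name__ == "__main__":
    # Hypothetical latencies; real values come from the platform's ACPI tables.
    table = [CState("C1", 1), CState("C2", 20), CState("C3", 100)]
    for limit in (0, 50, 1000):
        print(limit, pick_cstate(table, limit).name)
```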

How to enable cpuidle: since most platforms support the C1 state (i.e. the HLT instruction), Xen by default makes the idle vcpu enter only the C1 state. If the platform supports C-states beyond C1 (e.g. C2, C3), the Xen boot option "cpuidle" enables full C-state support. An example grub entry follows:

title Xen

root (hd0,0)

kernel /boot/xen.gz cpuidle

module /boot/vmlinuz-2.6.18.8-xen ro root=/dev/sda1

module /boot/initrd-2.6-xen.img

Tip: to check whether the platform supports more C-states, add the "cpuidle" Xen boot option and then look for C-state information in the "xm dmesg" output, for example:

(XEN) cpu4 cx acpi info:

(XEN) count = 3

(XEN) flags: bm_cntl[1], bm_chk[1], has_cst[1],

(XEN) pwr_setup_done[1], bm_rld_set[0]

The "count = 3" above means the platform supports three non-C0 C-states, e.g. C1, C2, and C3. If count = 1, the platform supports only C1, and the "cpuidle" option will probably have no effect.
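The count check can be automated. The Python sketch below extracts the per-CPU count from saved "xm dmesg" text. It assumes the message layout of the example above, with each "(XEN)" message on its own line; the function names and the regular expression are illustrative and not part of any Xen tool.

```python
import re

# Matches "cpuN cx acpi info:" followed by the "count = M" message.
_CX_RE = re.compile(r"cpu(\d+) cx acpi info:\s*(?:\(XEN\)\s*)?count = (\d+)")


def cstate_counts(dmesg_text):
    """Map CPU number -> number of non-C0 C-states reported for it."""
    return {int(cpu): int(count) for cpu, count in _CX_RE.findall(dmesg_text)}


def has_deep_cstates(dmesg_text):
    """True if any CPU reports more than the C1 state."""
    return any(count > 1 for count in cstate_counts(dmesg_text).values())


if __name__ == "__main__":
    sample = (
        "(XEN) cpu4 cx acpi info:\n"
        "(XEN) count = 3\n"
        "(XEN) flags: bm_cntl[1], bm_chk[1], has_cst[1],\n"
        "(XEN) pwr_setup_done[1], bm_rld_set[0]\n"
    )
    print(cstate_counts(sample), has_deep_cstates(sample))
```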

When cpuidle is enabled, xenpm can be used to view the C-state status:

# xenpm get-cpuidle-states 0

cpu id : 0

total C-states : 4

idle time(ms) : 66629024

C0 : transition [00000000000013725238]

residency [00000000000000558798 ms]

C1 : transition [00000000000000002263]

residency [00000000000000000018 ms]

C2 : transition [00000000000000311572]

residency [00000000000000296852 ms]

C3 : transition [00000000000013411403]

residency [00000000000066041515 ms]
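Two figures are often useful when reading this output: the share of time spent in each C-state and the average time spent per entry. The Python sketch below computes both from the numbers in the sample above. The numbers are copied by hand from the sample output, and the assumption that dividing residency by the transition count approximates the time per entry is made here for illustration only.

```python
# (transitions, residency in ms) copied from the sample output above.
CPUIDLE_SAMPLE = {
    "C0": (13725238, 558798),
    "C1": (2263, 18),
    "C2": (311572, 296852),
    "C3": (13411403, 66041515),
}


def residency_summary(states):
    """Return {name: (share_pct, avg_ms_per_transition)} for each state."""
    total_ms = sum(residency for _, residency in states.values())
    summary = {}
    for name, (transitions, residency) in states.items():
        share = 100.0 * residency / total_ms if total_ms else 0.0
        average = residency / transitions if transitions else 0.0
        summary[name] = (share, average)
    return summary


if __name__ == "__main__":
    for name, (share, average) in residency_summary(CPUIDLE_SAMPLE).items():
        print(f"{name}: {share:6.2f}% of time, {average:.3f} ms per entry")
```

For the sample, nearly all of the time is spent in C3, with an average stay of roughly 5 ms per entry.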

Potential side-effects of cpuidle

In Xen 3.4, cpuidle is enabled by default since c/s 19421. However, side effects may appear with certain hardware C-state implementations or hardware configurations, so users may occasionally observe latency or system time/TSC skew. This section describes the conditions that cause these side effects and how to mitigate them.

Latency

Latency can be caused by two factors: C-state entry/exit latency and extra latency caused by the broadcast mechanism.

C-state entry/exit latency is inevitable, since powering gates on and off takes time. Normally a shallower C-state incurs less latency but saves less power, and vice versa for a deeper C-state. The cpuidle governor tries to balance performance against power at a high level, and this is an area where tuning will continue.

Broadcast is necessary to handle the APIC timer stopping in deep C-states (>= C3) on some platforms. One platform timer source is chosen to carry the per-cpu timer deadlines and wake CPUs in deep C-states at the expected expiry. So far Xen 3.4 supports PIT and HPET as the broadcast source. In the current implementation, PIT broadcast runs in periodic mode (10 ms), which means up to 10 ms of extra latency can be added to an expiry expected by a sleeping CPU. This is only an initial implementation choice and could be enhanced to an on-demand on/off mode in the future; the current implementation avoids that complexity because of the PIT's slow access and short wrap count. HPET broadcast is therefore always preferred when it is available, since it adds negligible overhead and wakes CPUs on time. Still, some side effects exist with HPET as well.
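The "up to 10 ms" figure for periodic PIT broadcast follows from simple arithmetic: a deadline that falls between two ticks is only serviced at the next tick. The Python sketch below shows the calculation. It assumes ticks occur at exact multiples of the period, and the function name is hypothetical.

```python
PIT_PERIOD_US = 10_000  # 10 ms periodic broadcast, as described above


def periodic_wakeup_delay_us(deadline_us: int,
                             period_us: int = PIT_PERIOD_US) -> int:
    """Extra delay when a deadline is only serviced at the next periodic tick.

    Assumes ticks fire at 0, period, 2 * period, and so on.
    """
    return (-deadline_us) % period_us


if __name__ == "__main__":
    for deadline in (23_000, 30_000, 30_001):
        delay = periodic_wakeup_delay_us(deadline)
        print(f"deadline at {deadline} us is serviced {delay} us late")
```

A deadline that lands just after a tick waits almost a full period, which is the worst case mentioned above. A one-shot timer programmed to the deadline itself would not add this delay.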

Details are listed below:

For h/w supporting ACPI C1 (halt) only (as reported by the BIOS in the ACPI _CST method)

Such hardware is immune to this side effect, as only instruction execution is halted.

For h/w supporting ACPI C2 in which TSC and apic timer don't stop

The ACPI C2 type is a bit special: it is sometimes an alias for a deep CPU C-state, so current Xen 3.4 treats the ACPI C2 type in the same manner as the ACPI C3 type (i.e. broadcast is activated). If the user knows that the ACPI C2 type on the platform does not have that h/w limitation, 'lapic_timer_c2_ok' can be added in grub to deactivate the software mitigation.

For the remaining implementations, which support ACPI C2+ in which the apic timer is stopped:

HPET as broadcast timer source (clocksource)

HPET can deliver timely wakeup events to CPUs sleeping in deep C-states with negligible overhead, as stated earlier. However, the HPET mode in use makes some differences worth noting:

- If the h/w supports per-channel MSI delivery mode (interrupts via FSB), it is the best broadcast mechanism known so far. It has no latency side effect, and the IPIs used to broadcast wakeup events can be reduced by a factor of the number of available channels (each channel can independently serve one or several sleeping CPUs). Whenever this feature is available, it is preferred automatically.

- When MSI delivery mode is absent, legacy replacement mode must be used, with only one HPET channel available. This is not too bad, as the single channel can serve all sleeping CPUs by using IPIs to wake them. However, another side effect occurs: the PIT/RTC interrupts (IRQ0/IRQ8) are replaced by the HPET channel, so the RTC alarm feature in dom0 is lost unless RTC emulation is added between dom0's rtc module and Xen's hpet logic (which is not implemented so far).

Because of this side effect, this broadcast option was disabled by default in the past, and PIT broadcast was the default. A user who is sure the RTC alarm is not needed could force it on with the 'hpetbroadcast' grub option.

The recent improvement is that legacy mode HPET becomes the default broadcast source if MSI mode is absent. If the user tries to use the RTC alarm, 'max_cstate' is automatically limited to C1 (or C2 if 'lapic_timer_c2_ok' is given), which does not need broadcast, and the legacy mode HPET channel is disabled to make the RTC interrupts available. Users who do not care about the RTC alarm and do not want unknown RTC alarm usage to trigger this automatic cpuidle degradation should specify the 'hpetbroadcast' grub option.

PIT as broadcast timer source (clocksource)

In the past, if MSI based HPET interrupt delivery was not available or HPET was missing, PIT broadcast was the default in all cases. As said earlier, PIT broadcast is implemented in 10 ms periodic mode, which can incur up to 10 ms of latency for each deep C-state entry/exit. One natural result is 'many lost ticks' observed in some guests.

Currently, cpuidle is by default selectively disabled when HPET is not available, so on a system with only PIT, cpuidle remains disabled by default. Users who really want cpuidle with PIT as the broadcast source should specify the 'cpuidle' grub option.
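The current defaults for HPET and PIT broadcast described above can be summarized as a small decision procedure. The Python sketch below is a paraphrase of the prose, not Xen's code: the function names, the string labels, and the boolean parameters standing in for the 'cpuidle', 'hpetbroadcast', and 'lapic_timer_c2_ok' boot options are all hypothetical.

```python
from typing import Optional


def pick_broadcast_source(hpet_present: bool,
                          hpet_msi: bool,
                          cpuidle_option: bool) -> Optional[str]:
    """Return the broadcast source, or None when deep C-states stay off."""
    if hpet_present and hpet_msi:
        return "hpet-msi"      # preferred automatically when available
    if hpet_present:
        return "hpet-legacy"   # current default when MSI mode is absent
    if cpuidle_option:
        return "pit"           # only when the user forces cpuidle
    return None


def max_cstate_with_rtc_alarm(source: Optional[str],
                              hpetbroadcast: bool,
                              lapic_timer_c2_ok: bool,
                              platform_max: int) -> int:
    """Deepest allowed C-state once dom0 starts using the RTC alarm."""
    if source != "hpet-legacy" or hpetbroadcast:
        # No automatic degradation: other sources are unaffected, and
        # 'hpetbroadcast' keeps legacy HPET at the cost of the RTC alarm.
        return platform_max
    # Fall back to a state that needs no broadcast.
    return min(platform_max, 2 if lapic_timer_c2_ok else 1)


if __name__ == "__main__":
    source = pick_broadcast_source(True, False, False)
    print(source, max_cstate_with_rtc_alarm(source, False, False, 3))
```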

Recommendations

If the user does not care about power consumption but the platform exposes deep C-states, one mitigation is to add the 'max_cstate=' boot option to restrict the maximum allowed C-state (if limiting to C2, also add 'lapic_timer_c2_ok' where applicable). 'max_cstate' can also be modified at runtime with xenpm.

If the user does care about power consumption and has no requirement for the RTC alarm, always using HPET (by specifying 'hpetbroadcast') is preferred.

Finally, RTC emulation could be added on top of HPET, or PIT broadcast could be enhanced to use single-shot mode, but comments from the community on whether either is worth doing would be welcome.

System time/TSC skew

Similarly to the APIC timer, the TSC also stops in deep C-states in some implementations, which requires Xen to recover the lost counts in software on exit from a deep C-state. It is easy to imagine the kinds of errors such software methods can introduce. For details on how TSC skew can occur, its side effects, and possible solutions, refer to the Xen Summit presentation: XenSummit09pm.pdf

Below is a brief introduction to which algorithm is available in different implementations:

- The best case is a non-stop TSC at the h/w implementation level. For example, Intel Core i7 processors support this feature, which can be detected through CPUID. Xen does nothing once this feature is detected, so there is no extra software-caused skew beyond dozens of cycles due to crystal drift.

- If the TSC frequency is invariant across freq/voltage scaling (true for all Intel processors supporting VTx) and all processors within the box share one crystal, give the boot option 'consistent_tscs'. With this option, Xen syncs the APs' TSCs to the BSP's at 1 second intervals during per-cpu time calibration, and meanwhile recovers the TSC in a per-cpu style, where only the platform counter time elapsed since the last calibration point is compensated to the local TSC, using a scale factor calculated at boot time. This global synchronization together with per-cpu compensation limits TSC skew to the ns level in most cases.

- If the TSC frequency varies across freq/voltage scaling, or the boot option 'consistent_tscs' is not specified, Xen only recovers the TSC in a per-cpu style, where only the platform counter time elapsed since the last calibration point is compensated to the local TSC, using a local scale factor. In this case TSC skew across cpus accumulates and is easy to observe after the system has been up for some time.
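The per-cpu compensation described above amounts to adding the elapsed platform counter ticks, multiplied by a scale factor, to the TSC value recorded at the last calibration point. The Python sketch below shows that arithmetic and how two CPUs with slightly different local scale factors drift apart when nothing resynchronizes them. The function names, the frequencies, and the 100 Hz calibration error are hypothetical values chosen for illustration.

```python
from fractions import Fraction


def recover_tsc(tsc_at_calibration: int,
                platform_ticks_elapsed: int,
                scale: Fraction) -> int:
    """Estimate the TSC value after a period in which the TSC was stopped.

    `scale` is TSC cycles per platform counter tick.
    """
    return tsc_at_calibration + int(platform_ticks_elapsed * scale)


def skew_after(seconds: int, platform_hz: int,
               scale_a: Fraction, scale_b: Fraction) -> int:
    """TSC difference between two CPUs that use different scale factors."""
    ticks = seconds * platform_hz
    return recover_tsc(0, ticks, scale_a) - recover_tsc(0, ticks, scale_b)


if __name__ == "__main__":
    platform_hz = 14_318_180                              # hypothetical
    scale_a = Fraction(2_534_000_000, platform_hz)        # hypothetical
    scale_b = Fraction(2_534_000_100, platform_hz)        # off by 100 Hz
    for seconds in (1, 60, 3600):
        print(seconds, skew_after(seconds, platform_hz, scale_a, scale_b))
```

With a single boot-time scale factor and a periodic sync to the BSP, as under 'consistent_tscs', this kind of drift has no chance to accumulate between syncs.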

Recommendations

If you observe obvious system time/TSC skew and do not particularly care about power consumption, then, as with broadcast latency:

Limit 'max_cstate' to C1, or limit 'max_cstate' to a real C2 and give the 'lapic_timer_c2_ok' option.

Or, if you care about power and are sure that the processor TSC frequency is invariant and all processors within the box share one crystal, give the boot option 'consistent_tscs'.

Or, better, run your workload on a newer platform that supports either a constant TSC frequency or the non-stop TSC feature.

xenpm - userspace control tool

See xenpm command

Xen 3.4 improvement in power management

- Better support to deep C-states with APIC timer/tsc stop

- More efficient cpuidle 'menu' governor

- More cpufreq governors (performance, userspace, powersave, ondemand) and drivers supported

- Enhanced xenpm tool to monitor and control Xen power management activities

- MSI-based HPET delivery, with less broadcast traffic when cpus are in deep C-states

- Power aware option for credit scheduler - sched_smt_power_savings

- Timer optimization for reduced break events (range timer, vpt align)

Xen hypervisor clocksource option

Some people (running AMD based systems) have noticed high ntpd jitter/noise in dom0 when running the Xen hypervisor (xen.gz) with the "clocksource=hpet" option, while "clocksource=acpi" or "clocksource=pit" works fine for them without jitter.

You can check which clocksource the Xen hypervisor is currently using by running "xm dmesg | grep -i timer" and looking for messages like:

"(XEN) Platform timer is 3.579MHz ACPI PM Timer" or

"(XEN) Platform timer is 1.193MHz PIT" or

"(XEN) Platform timer is 14.284MHz HPET".

You can set the timer by specifying "clocksource=hpet", "clocksource=acpi", or "clocksource=pit" for the Xen hypervisor (xen.gz) in the grub bootloader configuration. For example:

title Xen

root (hd0,0)

kernel /boot/xen.gz clocksource=hpet

module /boot/vmlinuz-2.6.18.8-xen ro root=/dev/sda1

module /boot/initrd-2.6-xen.img
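To confirm which platform timer was actually selected after a reboot, the "Platform timer is ..." message quoted earlier in this section can be parsed from saved "xm dmesg" output. The Python sketch below does this with a regular expression based only on the three example messages in this document; the function name is hypothetical, and other Xen versions may word the message differently.

```python
import re
from typing import Optional, Tuple

_TIMER_RE = re.compile(r"Platform timer is ([\d.]+)MHz (.+)")


def platform_timer(dmesg_text: str) -> Optional[Tuple[float, str]]:
    """Return (frequency in MHz, timer name), or None if no message is found."""
    match = _TIMER_RE.search(dmesg_text)
    if match is None:
        return None
    return float(match.group(1)), match.group(2).strip()


if __name__ == "__main__":
    print(platform_timer("(XEN) Platform timer is 3.579MHz ACPI PM Timer"))
    print(platform_timer("(XEN) Platform timer is 1.193MHz PIT"))
```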

Note about AMD CPUs

Xen 3.4 and 4.0 support power management on AMD family 0x10 (10h) and later CPUs, but NOT on family 15 (0xf) CPUs.
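The CPU family can be derived from the value CPUID leaf 1 returns in EAX: the base family is in bits 8-11, and when it equals 0xF the extended family in bits 20-27 is added to it. The Python sketch below decodes the family and applies the rule stated above. The function names and the example EAX values are hypothetical, and the check covers only the family condition given in this note.

```python
def cpu_family(cpuid_1_eax: int) -> int:
    """Decode the display family from CPUID leaf 1, register EAX."""
    base = (cpuid_1_eax >> 8) & 0xF
    extended = (cpuid_1_eax >> 20) & 0xFF
    # The extended family only counts when the base family is 0xF.
    return base + extended if base == 0xF else base


def amd_pm_supported(family: int) -> bool:
    """Apply the rule from this note: family 0x10 and later only."""
    return family >= 0x10


if __name__ == "__main__":
    for eax in (0x00100F22, 0x00000F4A):  # hypothetical example values
        family = cpu_family(eax)
        print(hex(eax), hex(family), amd_pm_supported(family))
```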