Xen Project Schedulers

Overview

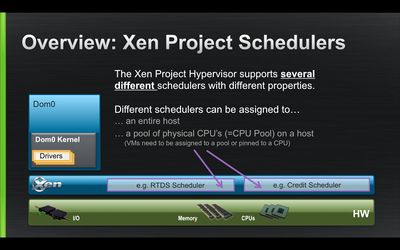

The Xen Project Hypervisor supports several different virtual CPU schedulers, with different properties.

The job of a hypervisor's scheduler is to decide, at any given time, which of the vCPUs belonging to the various virtual machines should run on the host's physical CPUs (pCPUs).

Xen also supports having multiple schedulers active at the same time, on disjoint groups of pCPUs (see cpupool).

In this case, each pool has its own scheduler. Even if two pools use the same scheduler, they run two completely separate and isolated instances of the same scheduling algorithm.
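The isolation between pools can be pictured with a small model. This is only an illustrative sketch: `SchedulerInstance`, `CpuPool`, `pcpus_are_disjoint` and the `tslice_ms` parameter name are hypothetical and do not correspond to Xen's internal data structures.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SchedulerInstance:
    """One private instance of a scheduling algorithm (hypothetical model)."""
    algorithm: str
    params: Dict[str, int] = field(default_factory=dict)


@dataclass
class CpuPool:
    """A group of pCPUs together with the scheduler instance that drives them."""
    name: str
    pcpus: List[int]
    scheduler: SchedulerInstance


def pcpus_are_disjoint(pools: List[CpuPool]) -> bool:
    """Return True if no pCPU belongs to more than one pool."""
    seen = set()
    for pool in pools:
        for cpu in pool.pcpus:
            if cpu in seen:
                return False
            seen.add(cpu)
    return True


# Two pools running the same algorithm still get separate instances.
pool_a = CpuPool("pool-a", [0, 1], SchedulerInstance("credit"))
pool_b = CpuPool("pool-b", [2, 3], SchedulerInstance("credit"))

# Tuning one instance leaves the other untouched.
pool_a.scheduler.params["tslice_ms"] = 5
```

In this model, changing a parameter in `pool_a` has no effect on `pool_b`, which mirrors the isolation described above.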

The user interacts with and affects the behaviour of the scheduler by:

- checking or changing a scheduler's global parameters,

- checking or changing a VM's scheduling parameters.
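As a rough illustration of these two kinds of interaction, the sketch below builds (but does not run) `xl sched-credit` command lines for the Credit scheduler. The helper names `credit_global_cmd` and `credit_domain_cmd`, the domain name `guest1` and the example values are hypothetical. The flags follow the `xl` manual page as far as we know, so check `xl help sched-credit` on your Xen version before relying on them.

```python
from typing import List, Optional


def credit_global_cmd(tslice_ms: Optional[int] = None,
                      cpupool: Optional[str] = None) -> List[str]:
    """Build a command that shows or sets scheduler-wide Credit parameters."""
    cmd = ["xl", "sched-credit", "-s"]
    if cpupool is not None:
        cmd += ["-p", cpupool]          # restrict to one cpupool
    if tslice_ms is not None:
        cmd += ["-t", str(tslice_ms)]   # timeslice, in milliseconds
    return cmd


def credit_domain_cmd(domain: str,
                      weight: Optional[int] = None,
                      cap: Optional[int] = None) -> List[str]:
    """Build a command that shows or sets one VM's Credit parameters."""
    cmd = ["xl", "sched-credit", "-d", domain]
    if weight is not None:
        cmd += ["-w", str(weight)]      # relative share of CPU time
    if cap is not None:
        cmd += ["-c", str(cap)]         # upper limit, in percent of one pCPU
    return cmd


# With no values given, the commands only display the current settings.
print(" ".join(credit_global_cmd()))
print(" ".join(credit_domain_cmd("guest1", weight=512)))
```

The commands are returned as argument lists so that they can be inspected, logged, or passed to `subprocess.run` on a host where `xl` is available.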

Currently Available Schedulers

The Credit Scheduler

Credit is a general purpose, weighted fair share scheduler. It was the default scheduler until Credit2 replaced it in Xen 4.12.
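To give an idea of what "weighted fair share" means, the sketch below computes the fraction of CPU time each VM would be entitled to when all VMs compete for the CPU. It only illustrates the proportional-share idea and is not Credit's actual accounting. The function name `fair_shares` and the VM names and weights are made up for the example.

```python
from typing import Dict


def fair_shares(weights: Dict[str, int]) -> Dict[str, float]:
    """Return each VM's entitled fraction of CPU time, proportional to its weight."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("at least one positive weight is required")
    return {vm: weight / total for vm, weight in weights.items()}


# A VM with twice the weight is entitled to twice the CPU time under contention.
shares = fair_shares({"vm-a": 256, "vm-b": 512, "vm-c": 256})
for vm, share in shares.items():
    print(f"{vm}: {share:.0%}")
```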

The Credit2 Scheduler

Credit2 is the evolution of Credit. It is more scalable and handles latency-sensitive workloads better, while still being based on a general purpose, weighted fair share scheduling algorithm.

The RTDS Scheduler

RTDS is a real-time scheduler aimed at supporting real-time workloads in the cloud, as well as embedded and mobile virtualization use cases.

The ARINC653 Scheduler

ARINC653 is an embedded (automotive and avionics) real-time scheduler.

Use cases and Support Status

| Scheduler | Use-cases | Xen < 4.7 | Xen 4.8 | Xen 4.9 | Xen 4.12 |
|---|---|---|---|---|---|
| Credit | General purpose | Supported, Default | Supported, Default | Supported, Default | Supported |
| Credit2 | General purpose; optimized for low latency, scalability, high VM density | Experimental | Supported | Supported | Supported, Default |
| RTDS | Soft & firm real-time; embedded, mobile & automotive; graphics & gaming in the cloud | Experimental | Experimental, improved xl support | Experimental | Experimental |
| ARINC 653 | Hard real-time; avionics, drones, medical | Supported? | ? | ? | ? |

Historical Xen Schedulers

simple Earliest Deadline First (sEDF)

Quoting from sEDF's documentation (which is no longer in-tree), "this scheduler provides weighted CPU sharing in an intuitive way and uses real-time algorithms to ensure time guarantees."

The real-time algorithm used was Earliest Deadline First (EDF), modified so that it could be used as a general purpose scheduler too. It could work in both work conserving and non-work conserving modes.

sEDF was introduced in Xen 3.0, and was the default for a while. The scheduler was never properly adapted to deal with SMP systems and multi-vCPU VMs. Both worked, but behavior and performance were suboptimal and unreliable. It was eventually removed in Xen 4.6.
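The core rule of plain EDF, on which sEDF was based, can be sketched in a few lines: among the runnable vCPUs, pick the one whose deadline comes first. This is a minimal illustration of textbook EDF, not of sEDF's modified algorithm. The `VCpu` class and `edf_pick` function are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class VCpu:
    name: str
    deadline: float        # absolute deadline, in arbitrary time units
    runnable: bool = True


def edf_pick(vcpus: List[VCpu]) -> Optional[VCpu]:
    """Return the runnable vCPU with the earliest deadline, or None if all are idle."""
    candidates = [v for v in vcpus if v.runnable]
    if not candidates:
        return None
    return min(candidates, key=lambda v: v.deadline)


queue = [
    VCpu("d1v0", deadline=30.0),
    VCpu("d2v0", deadline=10.0, runnable=False),  # blocked, so it is skipped
    VCpu("d3v0", deadline=20.0),
]
chosen = edf_pick(queue)
print(chosen.name if chosen else "idle")
```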

Borrowed Virtual Time (BVT)

A virtual-time-based, fair-share, general purpose scheduler used in Xen 2.0 and 3.0. Domains' shares of CPU time were determined by their weights. What is traditionally called the quantum, or timeslice, was known there as the context switch allowance, and was configurable. It was SMP enabled, but lacked a non-work conserving mode.

Atropos

A soft real-time scheduler, capable of guaranteeing absolute shares of CPU time and of allowing the slack to be used on a best-effort basis. Of course, as is always the case with real-time schedulers, CPU slices were only really guaranteed in the absence of CPU over-commitment.

It was in use in Xen 2.0.
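The over-commitment condition mentioned above can be expressed as a simple admission check: the reserved shares must not add up to more than the CPU capacity available. The sketch below is a generic illustration, not Atropos's actual admission logic. The function name `is_overcommitted` and the use of integer percentages of one pCPU are assumptions made for the example.

```python
from typing import List


def is_overcommitted(reserved_percent: List[int], num_pcpus: int = 1) -> bool:
    """Return True if the reserved shares exceed the total CPU capacity.

    Each entry is a guaranteed share, expressed as a percentage of one pCPU.
    """
    capacity = 100 * num_pcpus
    return sum(reserved_percent) > capacity


print(is_overcommitted([50, 30, 20]))       # fits exactly on one pCPU
print(is_overcommitted([50, 30, 30]))       # asks for 110% of one pCPU
print(is_overcommitted([50, 30, 30], 2))    # fits on two pCPUs
```

When the check reports over-commitment, at least one of the reservations cannot be honoured, which is why the guarantees only hold without it.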

Round Robin

It was... well... Round Robin! It was there as a simple demonstration of Xen's internal scheduler API, not for real production use.

Also See

- sched= boot parameter in Xen unstable boot options

- xl scheduler subcommands
